Research Programs

OUR APPROACH

We convene extraordinary minds to address the most important questions facing science and humanity.

CIFAR’s research programs bring together international, interdisciplinary researchers who collaborate for five-year terms. Each program is led by a director or two co-directors, engages approximately 20-40 fellows and advisors from around the world, and includes two or three CIFAR Azrieli Global Scholars appointed for two-year terms.

CIFAR research programs create impact beyond academia through a highly collaborative strategy. Target areas for impact emerge from the program’s core research agenda, and the strategy is informed by long-term, iterative exchange of ideas and perspectives between program members and non-academic stakeholders.

IMPACT CLUSTERS

CIFAR’s research programs are organized into five distinct Impact Clusters that address significant global issues and are committed to fostering an environment in which breakthroughs emerge.

Each cluster bridges CIFAR’s diverse global community by looking ahead to the challenges on the horizon, encouraging scholars from across disciplines to work collaboratively towards a bold and innovative future. Research programs may span multiple clusters depending on the nature and goals of their work.

Current research programs

2019, 2026

Boundaries, Membership & Belonging

Is it possible to have a world without “us” and “them”?

2003, 2007, 2012, 2019, 2025

Child & Brain Development

How do childhood experiences affect lifelong health?

2019, 2026

Earth 4D: Subsurface Science & Exploration

How do life, water and energy interact between the Earth’s vast subsurface and surface environments?

2014, 2020, 2026

Humans & the Microbiome

How do microbes that live in and on us affect our health, development and even behaviour?

2004, 2008, 2014, 2019, 2025

Learning in Machines & Brains

How do we understand intelligence and build intelligent machines?

1987, 1992, 1997, 2002, 2007, 2012, 2019, 2025

Quantum Materials

How could quantum materials transform our society?

Programs that are completing their work

1986, 1991, 1996, 2001, 2006, 2011, 2016, 2023

Gravity & the Extreme Universe

What is extreme gravity, and how can it help us understand the origin of the universe?

2002, 2007, 2012, 2019

Quantum Information Science

How do we harness the power of quantum mechanics to improve information processing?

Recently completed programs

2005, 2010, 2016

Genetic Networks

How do the interactions among genes influence health and development?

2014

Molecular Architecture of Life

How does life originate and what are the processes that make life possible?

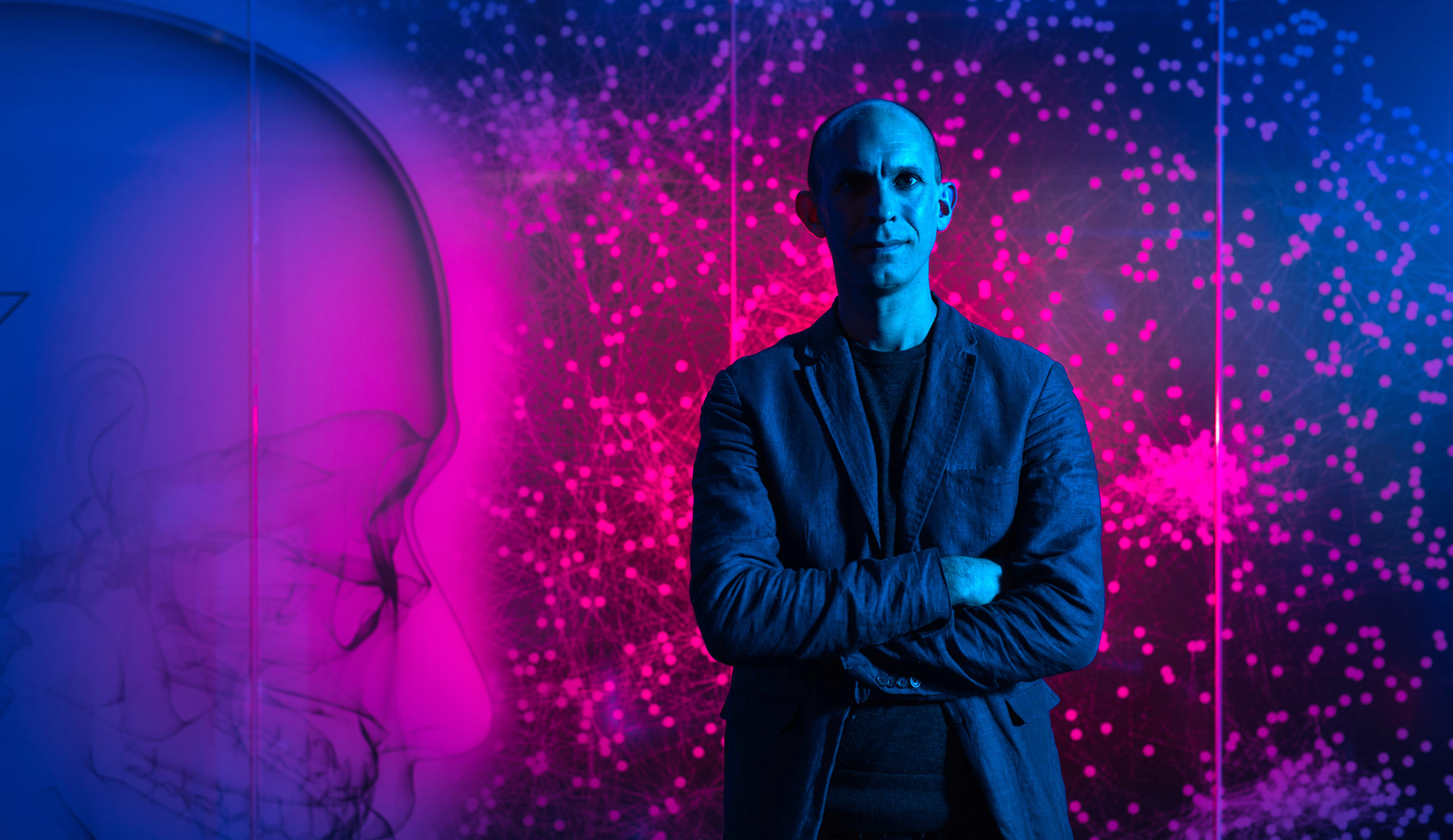

OUR FELLOWS

CIFAR fellows are collaborative researchers at the top of their fields.

Our community of fellows, advisors, CIFAR Azrieli Global Scholars, and Canada CIFAR AI Chairs includes more than 400 researchers from 18 countries. Their papers regularly rank among the top 1% most cited worldwide. Fellows are selected for academic and communication excellence, and for how well their expertise fits the goals of a program.

CATALYST FUND

We encourage our community of researchers to work across programs and disciplines.

Through Catalyst Funds, we facilitate and support high-risk ideas and projects within and across CIFAR’s portfolio of research programs. The time-limited grants provide flexibility for early-stage projects, encourage interdisciplinary collaborations, and address emerging and exploratory themes within or between research programs.